Microsoft

Interactive AI Agent

Role: UX Specialist | Project Manager

Duration: 6 months

Problem Statement

How can we use AI to reduce case lifecycle time?

Currently, applicants take an average of 3 months to return the information needed to start their application. How can we remove barriers to getting started so that cases are processed faster overall?

Solution:

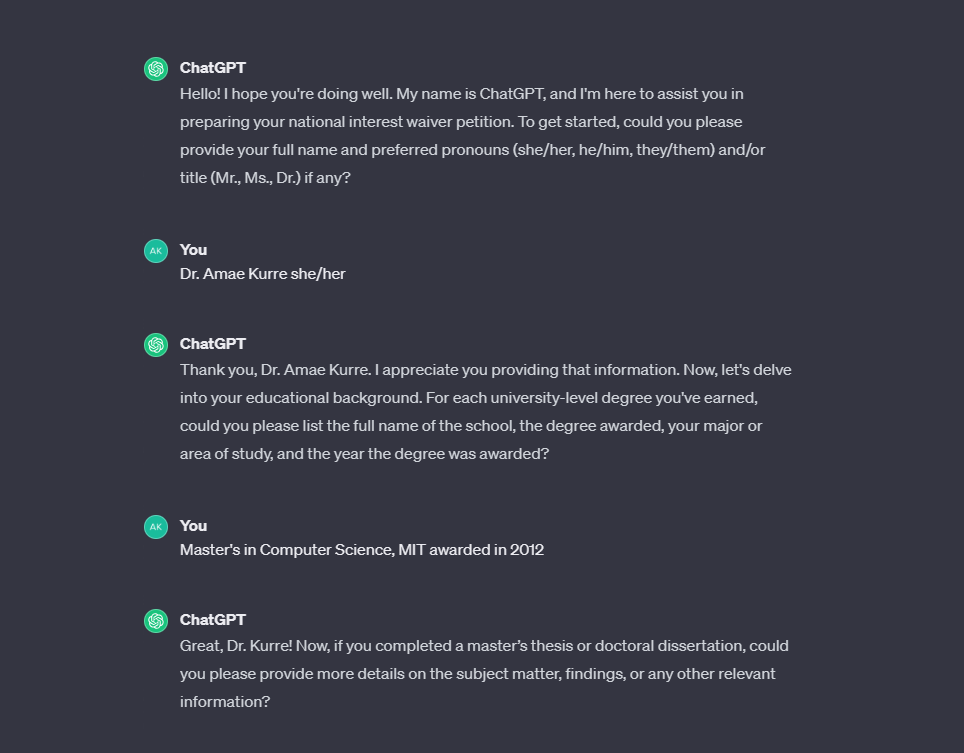

150 words input by user; 350 words output by AI

Harnessing the power of GPT-4, we created an interactive AI agent that takes a few sentences of input from a user and produces a lengthy, well-written output. This results in an easier and more delightful experience for the applicant. Normally, case preparation requires applicants to complete a Word document questionnaire of 6+ pages. The interactive component helps remove barriers that keep applicants from starting the process. The AI-generated output helps the applicant better understand what makes a good response and what type of information the application needs. Attorneys receive a large volume of content, which reduces the time it takes them to prepare a case.

Research

- Verbatim feedback on an annual sentiment survey shows that questionnaires are a huge pain point for applicants.

- Data analysis of case lifecycles shows that applicants delay returning questionnaires even after they have affirmed that they are ready to move forward and understand the time required to complete this type of application.

- Interviews and email communications with attorneys capture their pain points and demonstrate that questionnaires present an opportunity for improvement.

- Interviews with applicants further confirm that the questionnaire is a pain point for them.

- The initial concept for the AI agent was that it would replicate a typical questionnaire and walk applicants through each question in a linear fashion. This version proved too rigid and limited the power of GPT: users could not go back to a question and change their answers. The AI agent also frequently judged a user's response deficient and would not let the user move forward without providing more details, which made for a very frustrating experience.

- In the next version, much of the back-end engineering structure of the GPT was stripped down and the team reduced the number of questions asked. In this version, users could jump around during the chat and change prior answers.

  The team worked on prompt engineering to teach the GPT to distinguish good responses from bad ones so that it could coach users when a response was not quite sufficient. After more than 60 iterations, results were still very inconsistent: the same prompt and testing parameters in different instances of the GPT would yield different results. The team concluded that it would take an exorbitant amount of time, with no guarantee that the prompts could be refined to consistently achieve the project goal.

- The team had a collective aha moment: why are we making users do a bunch of writing when we have an entire LLM that is very capable of writing well? For the next iteration, we tried adding a short prompt within the existing system prompt to ask the GPT to create a lengthier description for each question based on the user's input.

  The results were disappointing, and the team was feeling discouraged.

- If you ask GPT the best way to use it to do something, it will tell you. We removed all prior iterations and prompts and started from scratch with a new, comprehensive system prompt built on what we had learned so far. This prompt focused on having the GPT create lengthy descriptions based on a few sentences of user input. The results impressed both the team and users.
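As a rough illustration of the final approach, a single system prompt that expands a few sentences of user input can be sketched as a chat-style message list. This is a minimal sketch, not the team's implementation: the prompt wording, the `build_messages` helper, and its parameters are all hypothetical, and the 350-word target is only borrowed from the example figures earlier in this case study.

```python
from typing import Dict, List

# Hypothetical system prompt. The team's actual prompt is not shown in this
# case study; the wording and the 350-word target are assumptions.
SYSTEM_PROMPT = (
    "You help applicants draft questionnaire responses. "
    "Given a few sentences from the applicant, write a detailed, "
    "well-organized description of about 350 words. "
    "Use only facts the applicant provided, and ask for missing details "
    "instead of inventing them."
)


def build_messages(user_input: str, question: str) -> List[Dict[str, str]]:
    """Assemble a chat-style message list: one system prompt, one user turn."""
    text = user_input.strip()
    if not text:
        raise ValueError("user_input must not be empty")
    # Pair the questionnaire question with the applicant's short notes.
    user_turn = f"Question: {question.strip()}\nApplicant notes: {text}"
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_turn},
    ]
```

A list in this shape is what chat-style LLM APIs typically accept. Keeping the instructions in one system prompt, rather than layering short prompts onto older ones, mirrors the "start from scratch" decision described above.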

Prototyping aka Prompt Engineering

User Testing

- For the first round of testing, we conducted seven one-hour moderated remote user testing sessions.

- Users were delighted by the help the AI provided in getting them “unstuck” and in helping them understand how to write to qualify for this type of application.

- The app saved users 5-6 hours on average.

- The app works best when users input 2-4 sentences. If users input extensive information, the app can only summarize it, not expand on it.

- Content tends to be generic. It improves when users provide more details and ask the AI to revise its initial description.

Looking Ahead

The app is currently going through the Responsible AI process for approval to launch. The process involves much effort and rigor in contemplating potential misuses of the tool and ensuring that mitigation strategies are in place.